The Problem With AI Resume Screening (And a Better Model)

You posted a job. Four hundred applications came in. Your ATS scored and ranked them. You opened the top fifty, worked through what time allowed, and moved on.

Somewhere in the pile you never got to was the person you would have hired.

The scoring wasn't broken. The model just stopped too soon.

AI resume screening tools typically score and rank applicants against a job description, which is a useful starting point but often causes teams to miss strong candidates who don't rise to the top on paper. A more complete approach treats application review as a navigation problem: using AI to explore your applicant pool after the initial score, adjust criteria as your thinking evolves, and surface candidates a ranked list alone would have buried. The goal isn't to automate the decision. It's to give recruiters a more flexible way to work through volume and find the people worth a closer look.

Application volume is rising, and the workload is rising with it

This isn't an isolated frustration. According to Elly's Q1 2026 research, 58% of recruiting teams report receiving more inbound candidates than they did before. And 43.5% say the result has been increased workload, not reduced effort.

AI screening tools are already widespread. Elly's Q4 2025 AI in Talent Acquisition report, based on a survey of 215 TA professionals, found that 60% of organizations already use AI for resume screening and review. The infrastructure is there. The relief, for many teams, isn't.

Part of the reason: having a ranked list doesn't solve the problem of working through it. Volume is still volume. And when the list is long, the candidates at the bottom often never get looked at.

What AI scoring actually does well

To be clear: scoring and ranking applications against a job description is genuinely useful. It removes clearly unqualified candidates from the pool, creates a starting point for review, and helps lean recruiting teams prioritize where to spend time first.

Elly's AI-native ATS does this natively as applications come in. So do most modern ATS tools and screening platforms. It's table stakes now, not a differentiator.

The problem isn't the scoring. It's what happens next.

The gap: a ranked list is a starting point, not a decision

Consider what a ranked list actually gives you. A static ordering based on how well each resume matches a fixed set of criteria, at the moment the job was posted.

That's useful. It's also just the beginning.

Even after a well-calibrated score runs, recruiters still face real work. A top tier of thirty or forty candidates who all scored well still needs to be worked through, and doing that efficiently, without losing track of where you are or what you've seen, is its own challenge. A ranked list doesn't help you navigate that group. It just orders them.

What the score can miss

There's also the question of who didn't surface at all. Candidates with nontraditional backgrounds, unconventional resumes, or skills described in different language than the job description used may score lower without being weaker. A static ranking buries them, and there's no easy way to go back and find them unless you know to look.
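To make the vocabulary problem concrete, here is a deliberately naive sketch of keyword-based scoring. It is an illustration only, not how Elly or any particular ATS scores resumes; the function name, the required terms, and both resume snippets are hypothetical.

```python
def keyword_score(resume_text: str, required_terms: list[str]) -> float:
    """Return the fraction of required terms that appear verbatim in the resume."""
    text = resume_text.lower()
    hits = sum(1 for term in required_terms if term.lower() in text)
    return hits / len(required_terms)


# Hypothetical criteria lifted straight from a job description.
required = ["paid social", "demand generation", "marketing automation"]

# Two made-up candidates describing similar experience in different words.
resume_a = "Led paid social and demand generation; owned marketing automation."
resume_b = (
    "Ran Meta and LinkedIn ad campaigns, built the lead pipeline, "
    "administered HubSpot workflows."
)

score_a = keyword_score(resume_a, required)
score_b = keyword_score(resume_b, required)

print(f"Candidate A: {score_a:.2f}")
print(f"Candidate B: {score_b:.2f}")
```

Candidate A mirrors the job description's wording and matches every term. Candidate B describes comparable work in different language and matches none. Real scoring models are more sophisticated than this, but the same mismatch can still push a capable applicant down the list.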

And then there's criteria drift. Once a recruiter has reviewed twenty or thirty profiles, the picture often shifts. Maybe seniority matters less than the job description implied. Maybe agency experience turns out to be a better signal than in-house background for this particular role. A rigid scoring tool can't move with that. It ranked the pool against the original criteria and stopped.

In both cases, the tool treated the score as the finish line.

The navigation problem

Meg Gowell, Head of Marketing at Elly, described this clearly when the AI Application Review feature launched. In a past role, she found herself manually downloading resumes in batches of ten from her ATS because the scoring and filter system alone wasn't surfacing what she was actually looking for.

It wasn't a speed problem. It was a navigation problem.

She knew the kind of candidate she was looking for. The tool couldn't translate that into a query. So she did it by hand.

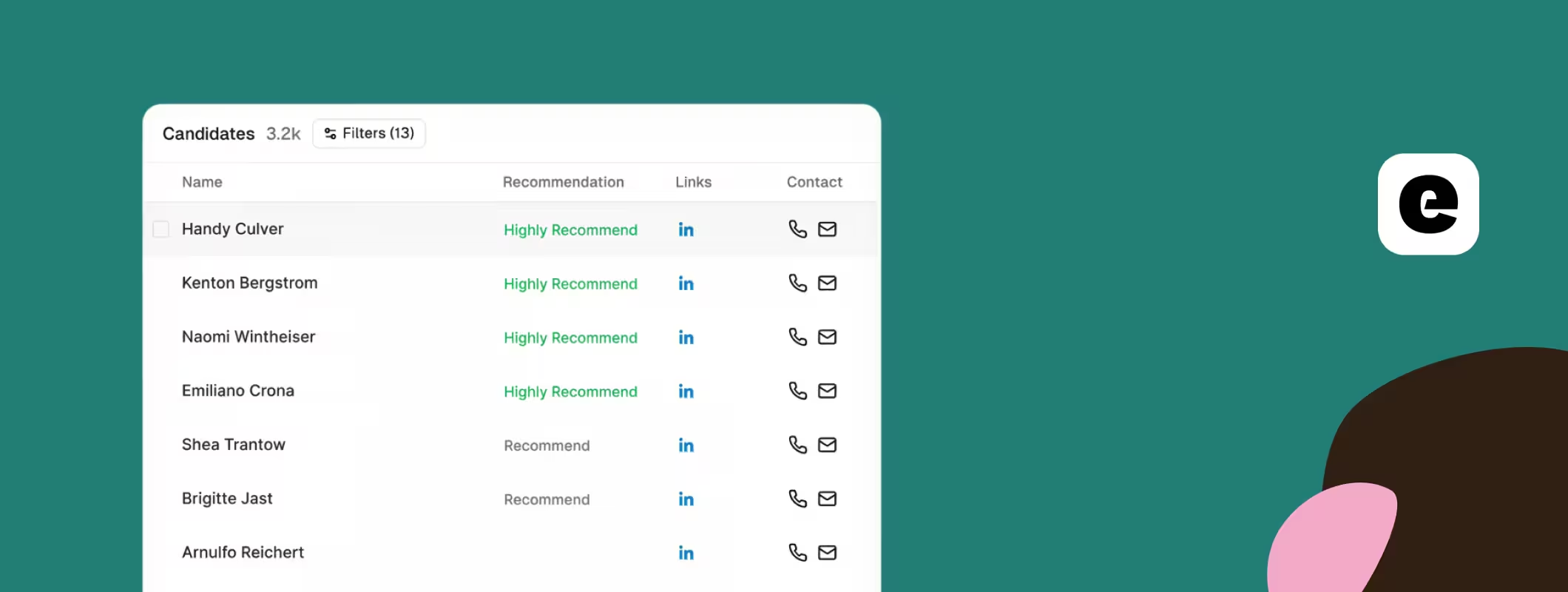

This is the gap that conversational application review is designed to close. Instead of rebuilding a search from scratch or scrolling through a static list, a recruiter can ask the pool a question: show me more junior profiles, find people with agency backgrounds, narrow to candidates in Eastern time.

The criteria evolve. The interface moves with them.

What the data says teams actually want

The clearest signal from Elly's research isn't that teams want AI to make decisions for them. It's the opposite.

From the Q1 2026 survey: 30.4% of recruiting teams cite human review as one of the main things needed to trust AI tools. And 52% see AI's future role in recruiting as supportive rather than autonomous. Only 13% expect fully autonomous screening.

The market isn't asking for AI to replace the review process. It's asking for tools that make human review faster, more flexible, and less likely to miss the right candidates.

Conversational application review is built on that premise. The point isn't to put scoring in place of human judgment. It's to give recruiters a faster, more flexible way to navigate volume so their judgment has better material to work with.

Why teams say AI is helping but can't measure it

Elly's Q1 2026 research found that 49.4% of teams say AI is helping but the impact is still unclear. Only 13.9% say the impact is measurable.

That ambiguity is partly structural. Most AI screening tools rank and filter without much transparency into what was surfaced, what was buried, or why. When a tool's logic isn't visible, it's hard to know whether the candidates worth hiring are actually making it through.

More visibility and control over what the tool is actually doing is consistently one of the things recruiting teams ask for. That's harder to build than a ranking algorithm. It's also what makes AI genuinely useful rather than just fast.

A better model for AI application review

Scoring applications is not the wrong approach. It's an incomplete one when it's treated as the final step rather than the first.

A more complete model looks like this: score the pool to remove clearly unqualified applicants. Then use a conversational interface to work through what's left, asking questions that don't fit neatly into a filter and adjusting criteria as thinking evolves. Keep the human in the process, not just at the end of it.
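The two-stage model above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Elly's implementation: the `Applicant` fields, the 0.5 score cutoff, and the `initial_screen` and `refine` helpers are all hypothetical, and the lambdas stand in for questions a recruiter would ask in plain language.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Applicant:
    name: str
    score: float            # initial match score against the job description
    years_experience: int
    agency_background: bool
    timezone: str


def initial_screen(pool: list[Applicant], min_score: float) -> list[Applicant]:
    """Stage 1: drop clearly unqualified applicants, then rank the rest by score."""
    kept = [a for a in pool if a.score >= min_score]
    return sorted(kept, key=lambda a: a.score, reverse=True)


def refine(
    candidates: list[Applicant], *criteria: Callable[[Applicant], bool]
) -> list[Applicant]:
    """Stage 2: keep candidates that satisfy every criterion the recruiter adds."""
    return [a for a in candidates if all(check(a) for check in criteria)]


# Made-up applicant pool for illustration.
pool = [
    Applicant("Avery", 0.91, 9, False, "Pacific"),
    Applicant("Blake", 0.74, 3, True, "Eastern"),
    Applicant("Casey", 0.68, 2, True, "Eastern"),
    Applicant("Devon", 0.22, 1, False, "Central"),
]

shortlist = initial_screen(pool, min_score=0.5)

# Criteria shift mid-review: more junior, agency background, Eastern time.
refined = refine(
    shortlist,
    lambda a: a.years_experience <= 4,
    lambda a: a.agency_background,
    lambda a: a.timezone == "Eastern",
)

print([a.name for a in shortlist])
print([a.name for a in refined])
```

The score only decides who stays in the pool. Everything after that is a set of criteria the recruiter can add, drop, or change without re-running the initial screen, which is why candidates ranked below the top spot can still surface once the picture shifts.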

That's not a more complicated workflow. It's a more honest one. Hiring decisions are made by people, and the tools that support them should be built to help people navigate, not just to rank and hand off.

FAQ

Does AI resume scoring introduce bias?

It can, particularly when tools rank against criteria that reflect assumptions built into the job description. Having a conversational layer on top of the score, where you can query the pool more broadly and adjust what you're looking for, gives recruiters more visibility and control over what's actually being surfaced.

What is the difference between AI resume screening and AI application review?

Screening typically refers to automated scoring or filtering. Application review is a broader process. AI can support it by helping you explore and navigate your pool after the initial screen, not just rank and eliminate.

Can AI replace reading resumes?

No, and the best tools don't try to. The goal is to help you quickly identify the candidates worth reading carefully, not to make the call for you.

What should I do if I get 500 applications for one role?

Let AI score the pool against your job description first to remove clearly unqualified applicants. Then use a conversational interface to work through what's left, asking the kinds of questions that don't fit neatly into a filter and adjusting your criteria as your thinking shifts.

Why do so many teams say AI is helping but can't measure the impact?

Because most AI screening tools rank and filter without much transparency into what was surfaced, what was buried, or why. More visibility and control over that process is consistently one of the things recruiting teams ask for.

Ready to see what your applicant pool actually contains? Try Elly's AI Application Review.