AI in Talent Acquisition: Q1 2026 Report on Adoption, Trust, and Hiring Outcomes

AI in Talent Acquisition is moving from adoption to intentional use

In our Q4 2025 report, we found that AI had already become part of everyday Talent Acquisition workflows. This Q1 2026 report looks at what happens next.

Based on survey responses from 100+ Talent Acquisition and People professionals across North America who are directly involved in recruiting and hiring decisions, the clearest divide is no longer between teams using AI and teams avoiding it. It is between teams using AI casually and teams using it intentionally.

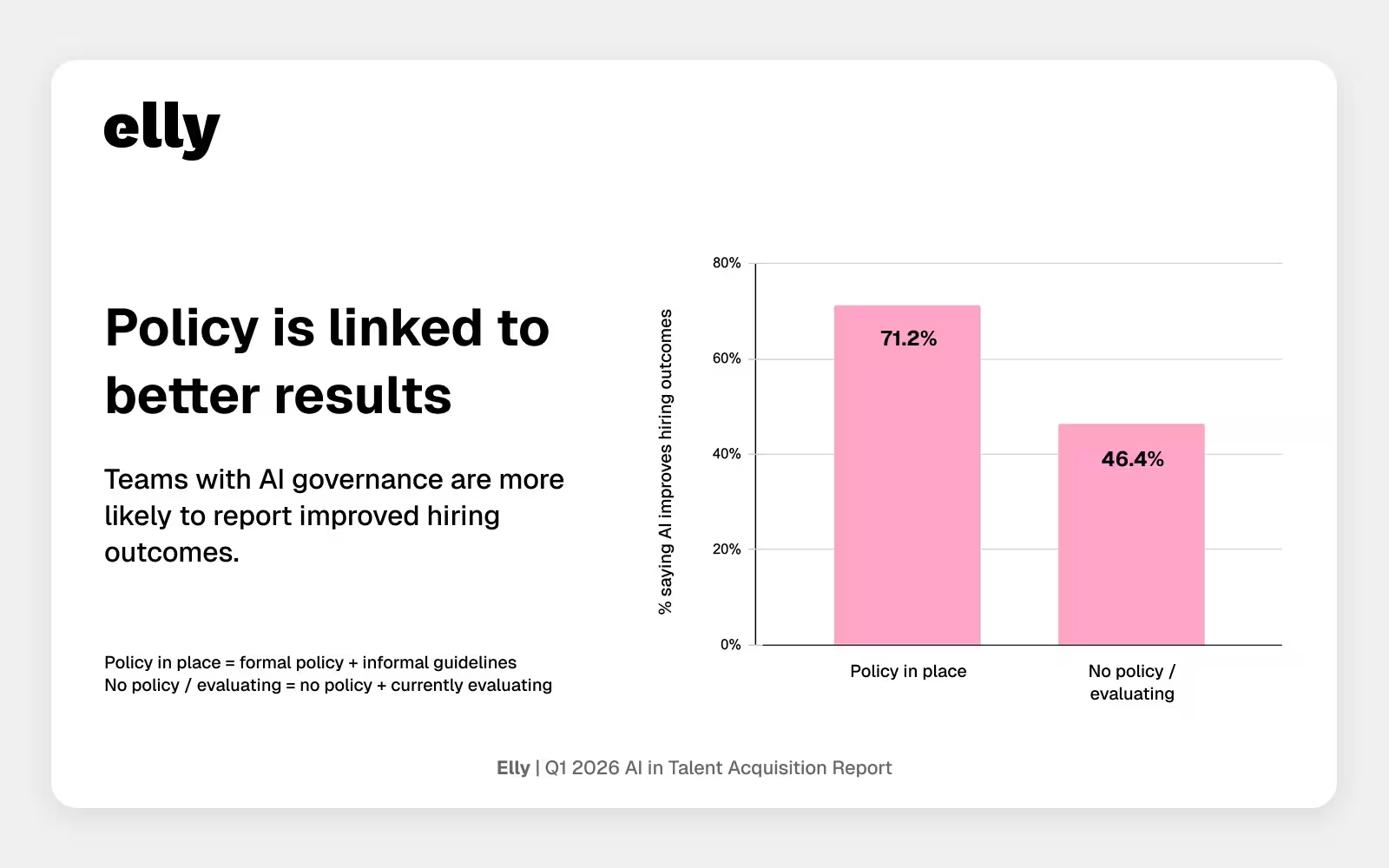

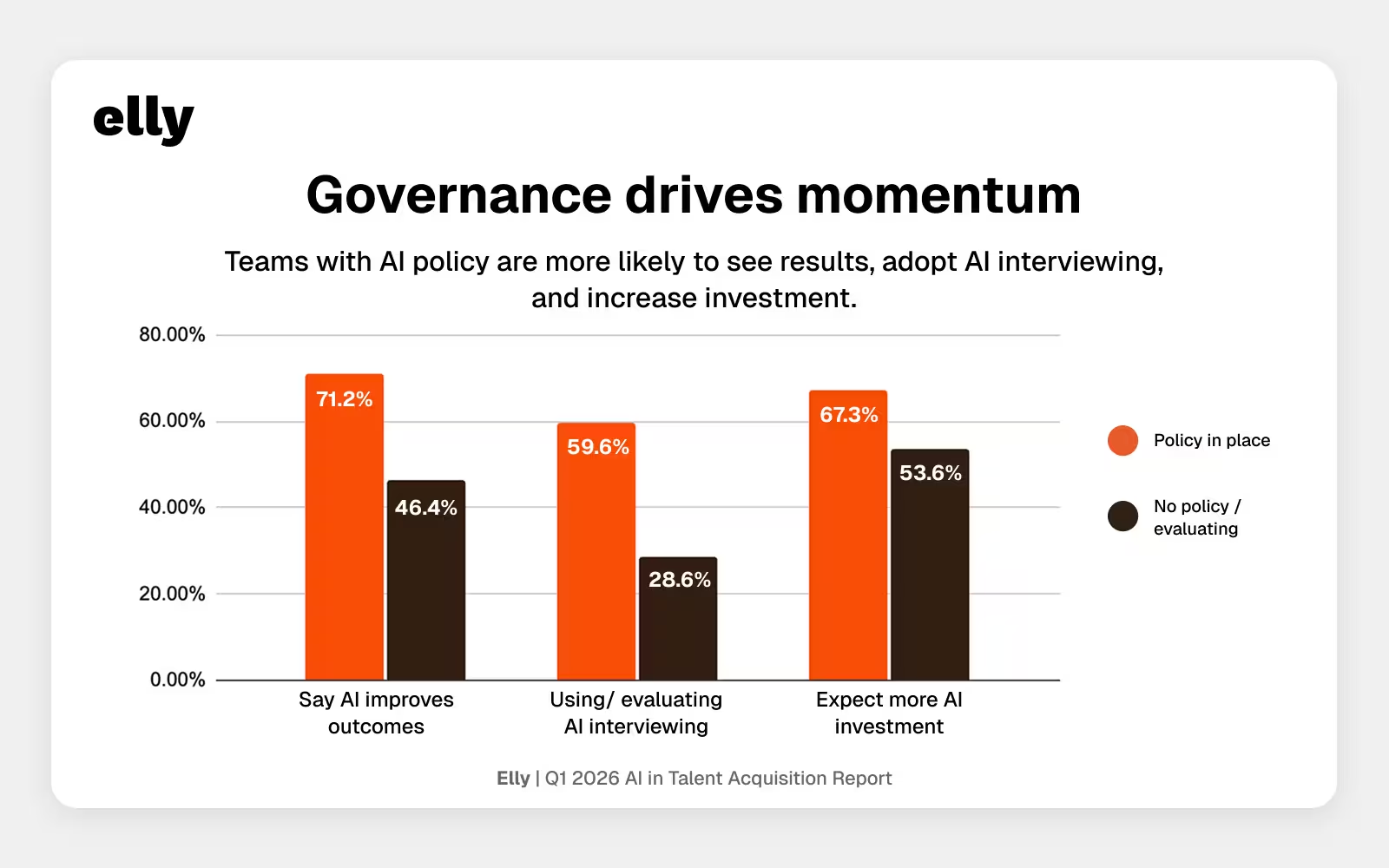

That difference shows up across the data. Teams that use AI more frequently are far more likely to say it is improving hiring outcomes. Teams with some form of AI policy are more likely to report positive results, experiment with advanced use cases, and expect increased investment. Teams exploring AI interviewing also tend to look more mature across the board.

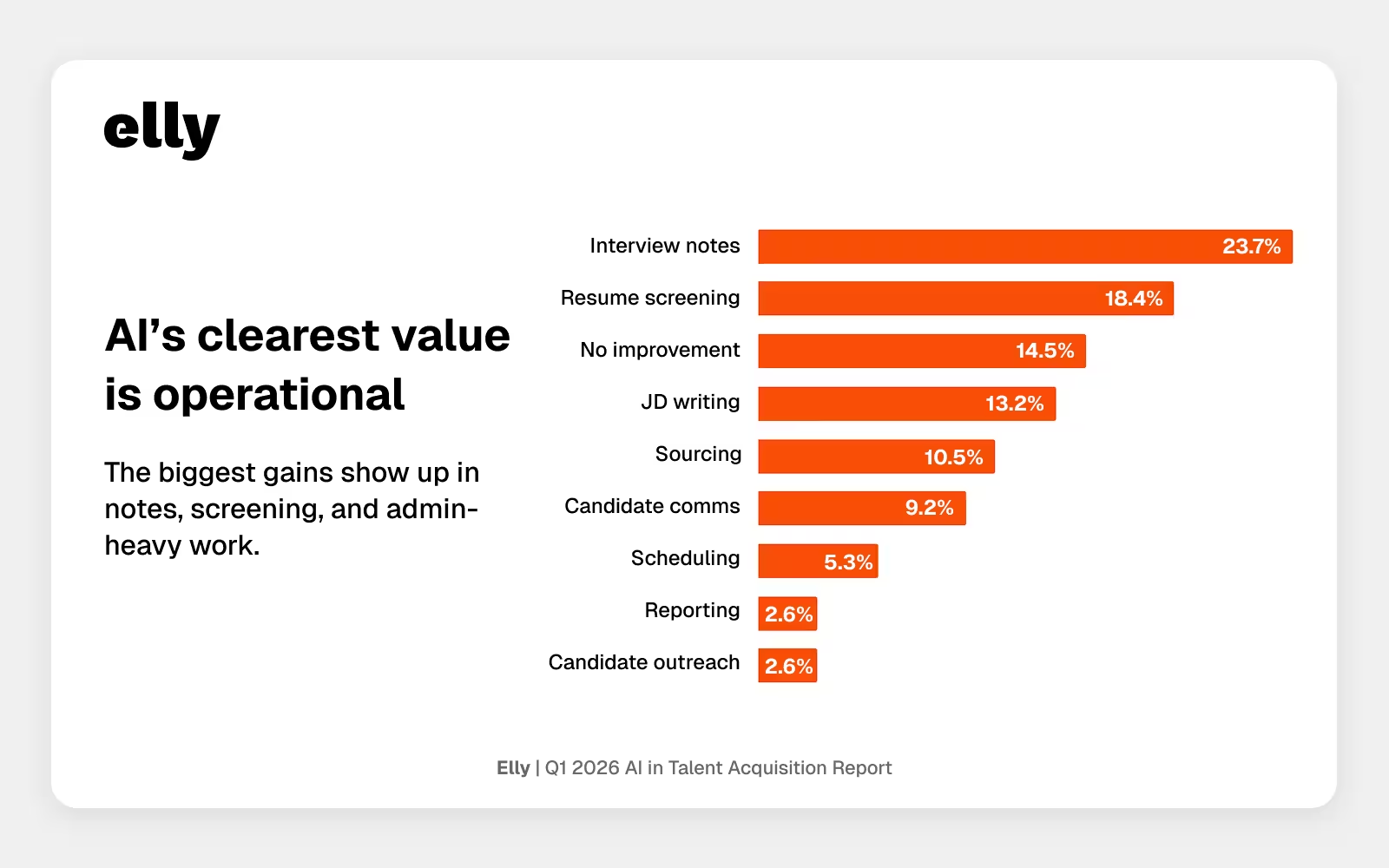

At the same time, trust remains selective. AI is earning the clearest confidence in operational work like interview notes, resume screening, and job description writing. More evaluative use cases still face a higher bar. Many teams believe AI is helping, but far fewer say that impact is measurable. And when respondents describe what would make them trust AI more, they point to proof, human review, and bias controls.

The takeaway from this quarter is not that AI maturity is uniform across Talent Acquisition. It is that the maturity gap is becoming easier to see. The teams moving forward most confidently are not simply adopting more tools. They are using AI more intentionally, with clearer workflows, stronger guardrails, and a better understanding of where it creates value.

Key findings from the Q1 2026 AI in recruiting report

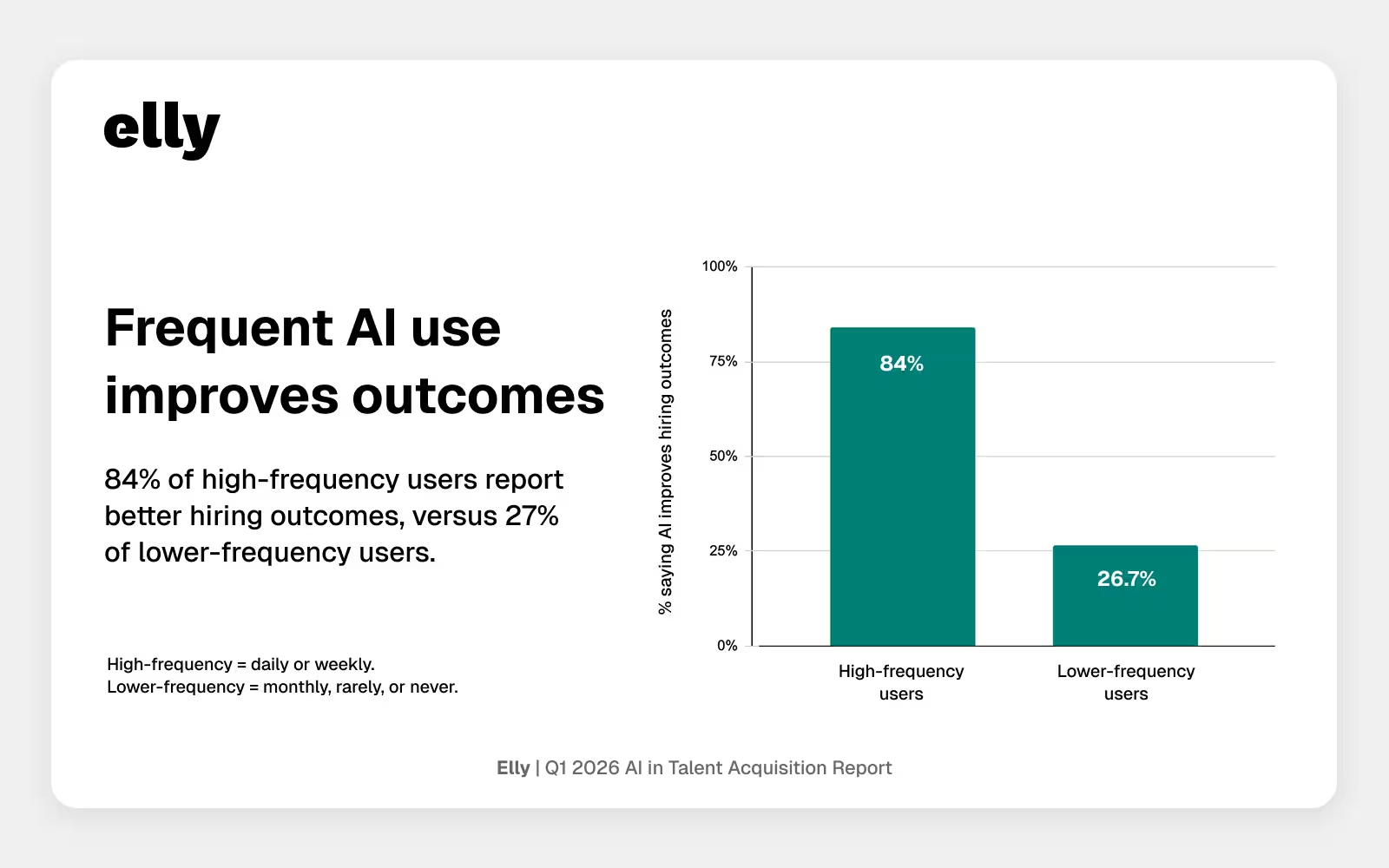

- 84.0% of high-frequency AI users say AI improves hiring outcomes, versus 26.7% of lower-frequency users.

- Teams with formal or informal AI policy are more likely to report positive outcomes than teams with no policy or teams still evaluating one (71.2% vs. 46.4%).

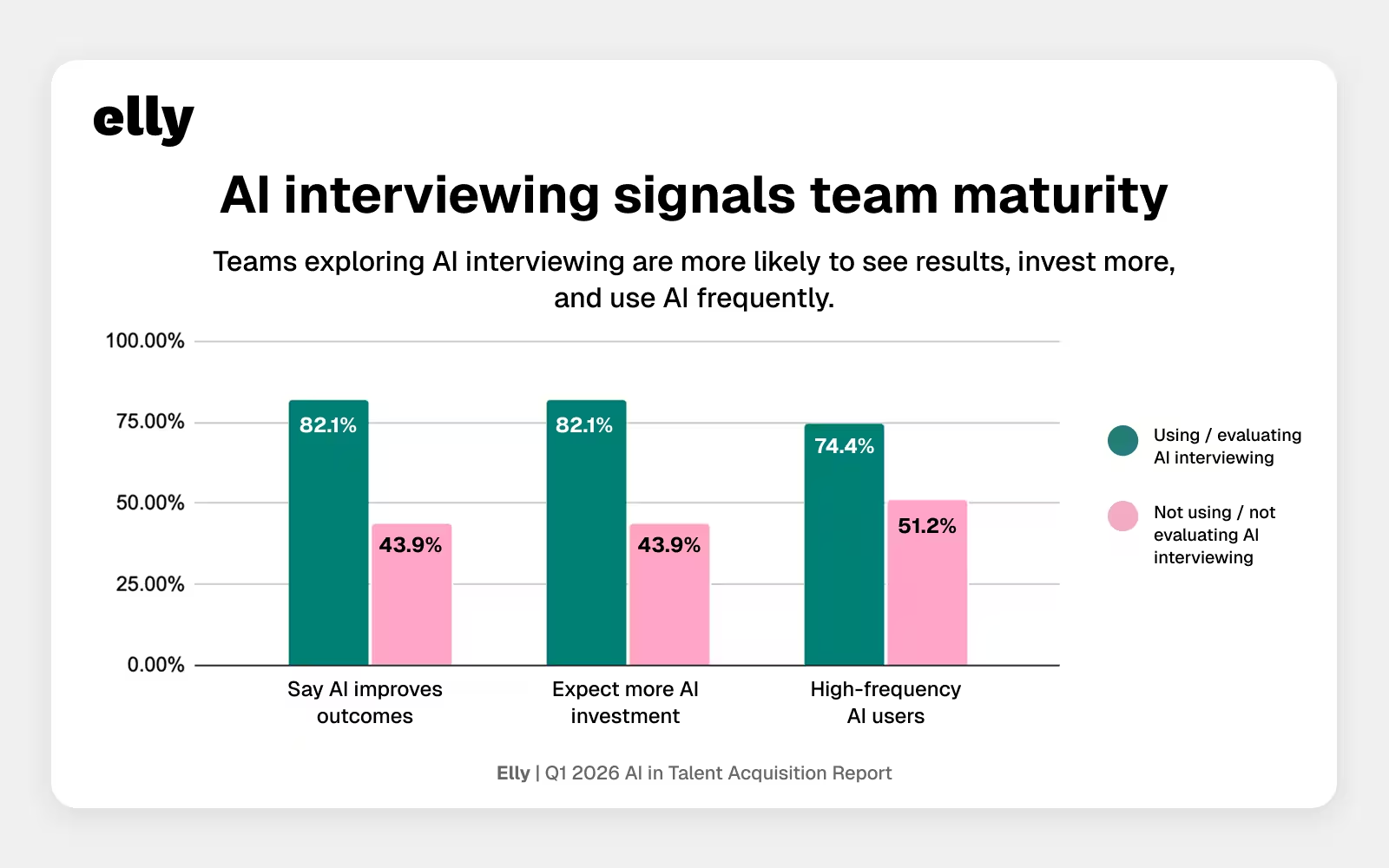

- AI interviewing is emerging as a strong signal of AI maturity. Teams using or evaluating it are more likely to report positive outcomes, increased investment, and higher-frequency AI use.

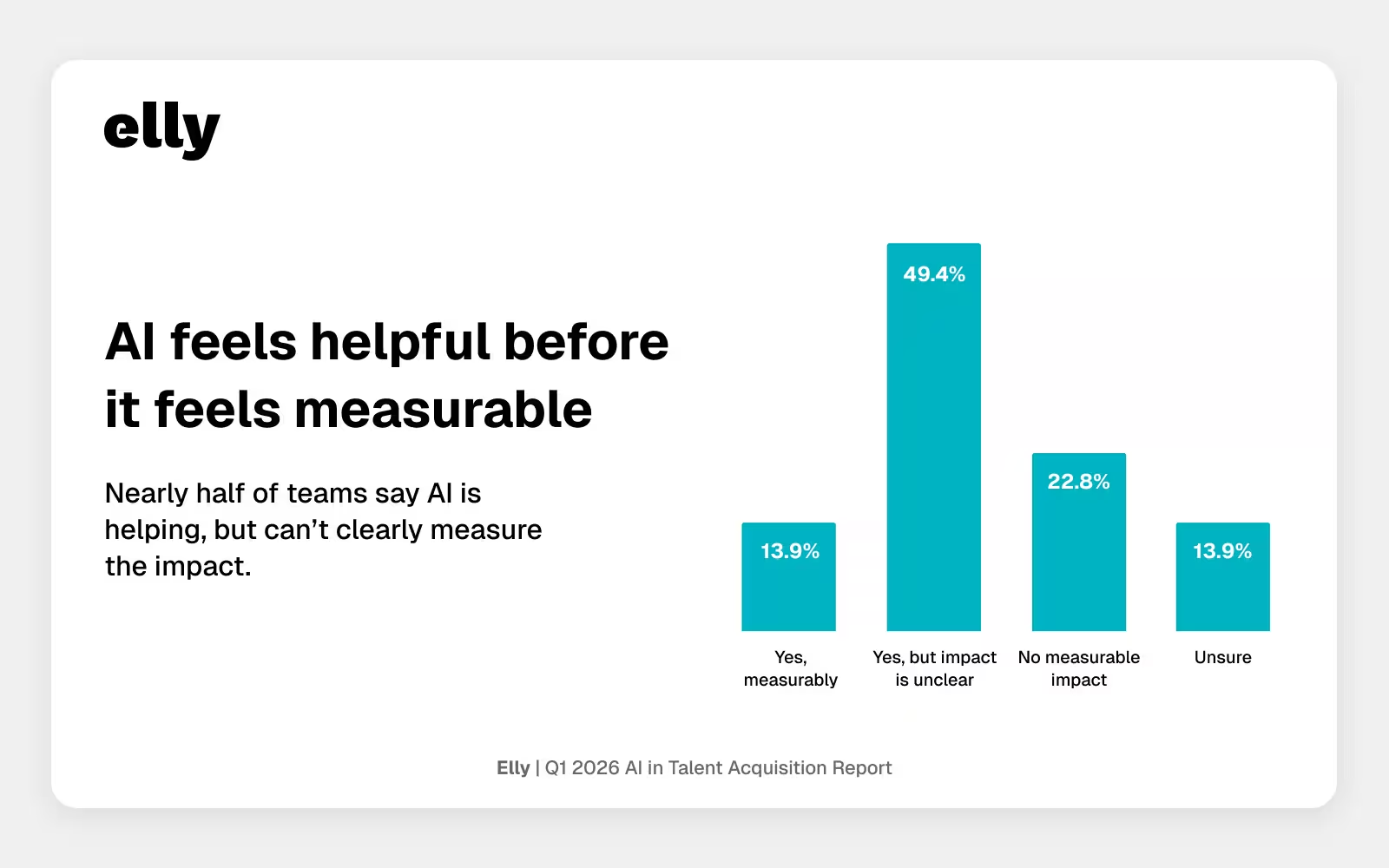

- 49.4% of respondents say AI is helping but that its impact is still unclear.

Section 1: How are companies using AI in recruiting today?

AI maturity in recruiting is broadening, but still uneven

AI has become common in recruiting workflows. But common does not mean consistent.

Some teams are using AI frequently and seeing clear value. Others are using it more lightly, with less confidence and less evidence of impact. So while adoption is broad, maturity is still uneven.

That matters because the more useful question now is not whether AI is present. It is whether teams have moved beyond experimentation and started using it with consistency, confidence, and structure.

In this survey, maturity shows up as a pattern:

- more frequent use

- stronger reported outcomes

- more willingness to test advanced use cases

- higher expected investment

- clearer policy and governance

AI maturity is not showing up as a single milestone. It is showing up as a cluster of behaviors.

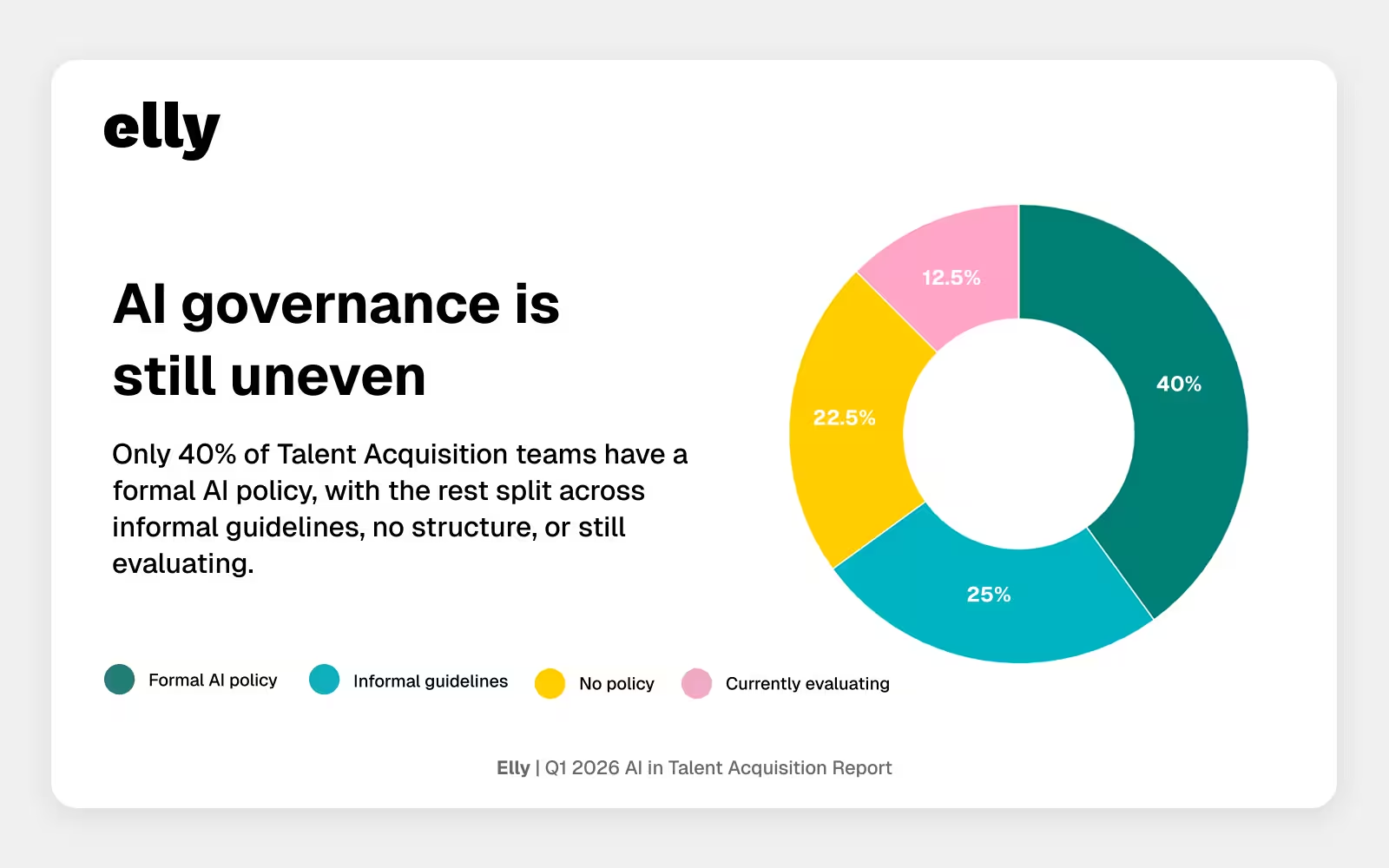

One of the clearest signs of that uneven maturity is governance. Some teams already have formal AI policy in place, while others are still relying on informal guidelines or evaluating their approach.

Where is AI actually improving recruiting workflows?

The clearest gains from AI are still showing up in lower-risk, operational work.

When respondents were asked where AI has most improved their workflow, the top answers were interview notes and summaries, resume screening, and job description writing. These are all tasks where speed, consistency, and administrative relief matter, and where AI can help without acting as the final decision maker.

"In my experience, AI is helping narrow down a massive pool of inbound applications. I don't have enough data yet to say with confidence that AI is improving the outcomes at the end of the day. What I can say is that AI is helping to screen a resume faster than a human could, and bring forward candidate resumes that are in ballpark. When we think about 100's of thousands of applications, AI can drastically help."

Beth Marceau | CEO and Fractional Recruiter @ Fractional Recruiting Co.

That distinction is important. AI is not earning trust evenly across the hiring process. It is earning trust first where the stakes are lower, the work is repetitive, and human review can stay close to the output.

The cross-question data makes that even clearer. Among high-frequency AI users, 84.0% say AI is improving hiring outcomes at least somewhat. Among lower-frequency users, that drops to 26.7%. Among teams using or evaluating AI interviewing, 82.1% say AI is improving outcomes, compared with 43.9% among teams that are not.

Early wins in operational work may be what give teams the confidence to go deeper. But that progression is not automatic. Some use cases still face a much higher trust bar.

Section 2: Does AI actually improve hiring outcomes?

Many teams say AI is helping, but fewer can clearly prove it

One of the clearest patterns in the data is the gap between perceived value and measurable proof.

A large share of respondents say AI is helping, but the impact is still unclear. Far fewer say the impact is measurable. Others report no measurable impact or remain unsure.

"AI is such a buzzword, especially right now. We know much of our recruiting work can be automated and we want it to be as the constant sentiment is doing more with less (less people, less resources, less money), but the actual impact and time saved using AI is very difficult to measure. Time saved in general is a hard metric to accurately quantify."

Maggie Ark | Senior Talent Acquisition at Bounce

That creates an important tension. AI is delivering enough value that most teams do not want to walk away from it. But many still do not have the evidence, systems, or benchmarks needed to clearly quantify what that value is.

That gap also seems tied to maturity. Teams with some form of AI policy are much more likely to say AI is improving outcomes than teams with no policy or teams still evaluating one (71.2% vs. 46.4%). The same pattern is even stronger when comparing high-frequency and lower-frequency users (84.0% vs. 26.7%).

So the divide is not just about whether AI is being used. It is about whether teams have operationalized it enough to recognize and defend the value being created.
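To make the size of those differences easier to compare, the short Python sketch below computes each gap in percentage points. It uses only the shares reported in this document for respondents who say AI is improving hiring outcomes. The labels and the `gap_in_points` helper are illustrative names, not part of the survey instrument.

```python
# Shares (percent) of respondents who say AI is improving hiring outcomes,
# as reported in this document. Each pair is (higher group, lower group).
comparisons = {
    "usage frequency (high vs. lower)": (84.0, 26.7),
    "AI policy (formal/informal vs. none/evaluating)": (71.2, 46.4),
    "AI interviewing (using/evaluating vs. not)": (82.1, 43.9),
}


def gap_in_points(higher: float, lower: float) -> float:
    """Return the difference between two percentages, in percentage points."""
    return round(higher - lower, 1)


# Hypothetical helper output: one gap per comparison, keyed by label.
gaps = {
    label: gap_in_points(higher, lower)
    for label, (higher, lower) in comparisons.items()
}

# Print the comparisons from largest gap to smallest.
for label, gap in sorted(gaps.items(), key=lambda item: item[1], reverse=True):
    print(f"{label}: {gap} percentage points")
```

Read this way, usage frequency shows the widest gap (57.3 points), followed by AI interviewing (38.2 points) and policy (24.8 points). These are descriptive differences between groups, not evidence that one factor causes the other.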

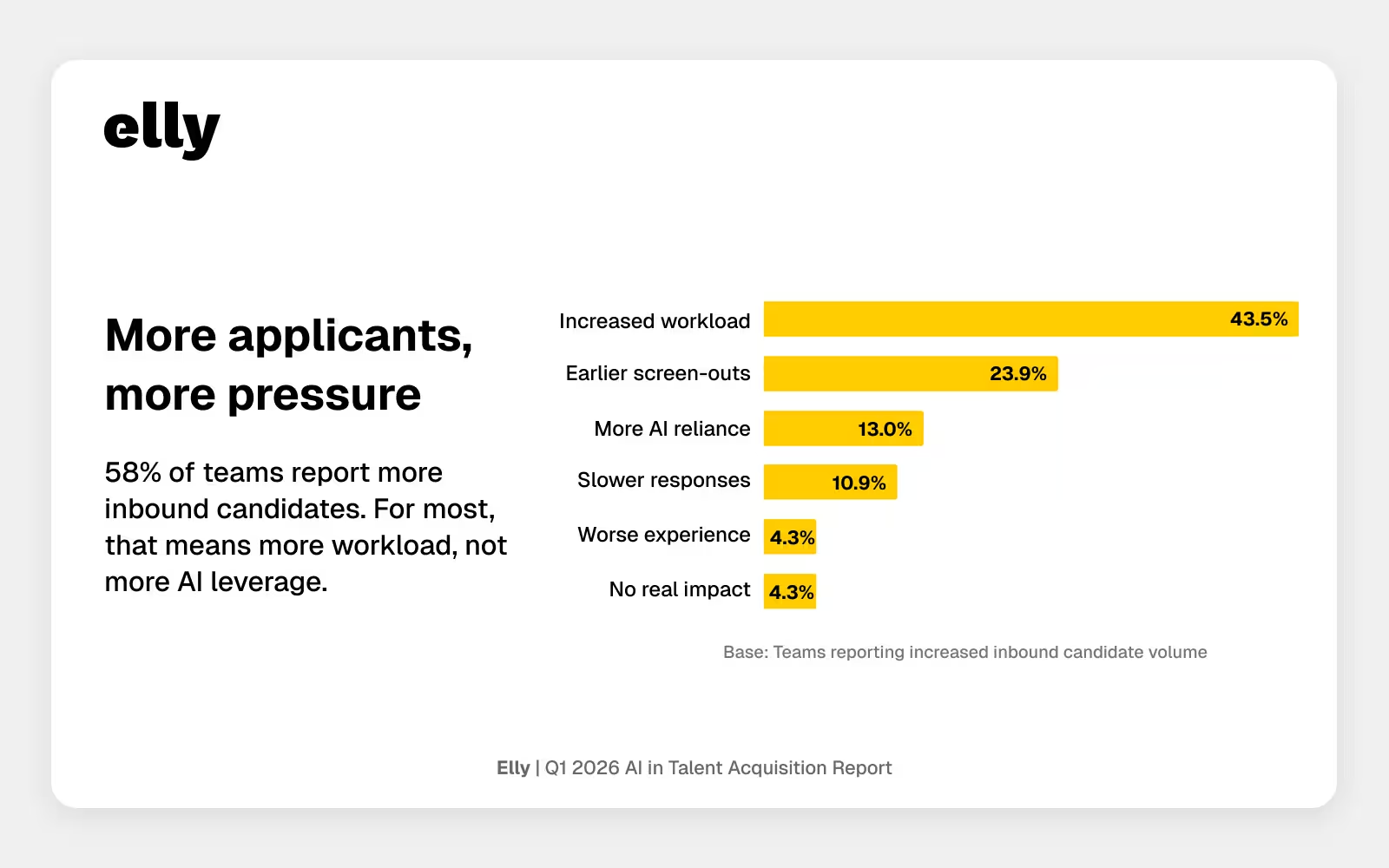

Is AI helping recruiters manage rising candidate volume?

The broader hiring environment continues to put Talent Acquisition teams under pressure.

More than half of respondents reported increased inbound candidate volume. For many, that increase mostly translates into more recruiter workload, not less. Others report more candidates being screened out earlier. A much smaller share say rising volume has led them to rely more heavily on AI tools.

"Higher application volume doesn’t automatically mean more reliance on AI, especially given the legal constraints around making sure AI isn’t making hiring decisions. In practice, it often means recruiters have to get more creative with screening questions while still dealing with review bottlenecks. We had 3,000 applications for a mid-level engineering role in just a few days. Across multiple open roles, there’s simply no way to review that volume as quickly as candidates deserve, so strong candidates can still end up sitting in review longer than they should."

Lindsey Baumler | Manager, Talent Partners at Ontra.ai

Pressure can create demand for efficiency, but it does not automatically create trust in AI. Teams may feel urgency to solve the problem of volume, but they still evaluate AI based on reliability, fairness, and workflow control.

Pain creates demand for solutions. It does not decide which solutions teams are ready to trust.

Section 3: How widely are AI interviewing tools being adopted?

AI interviewing is becoming a real test case for trust

AI interviewing stands out as one of the clearest indicators of where teams are testing the boundaries of trust.

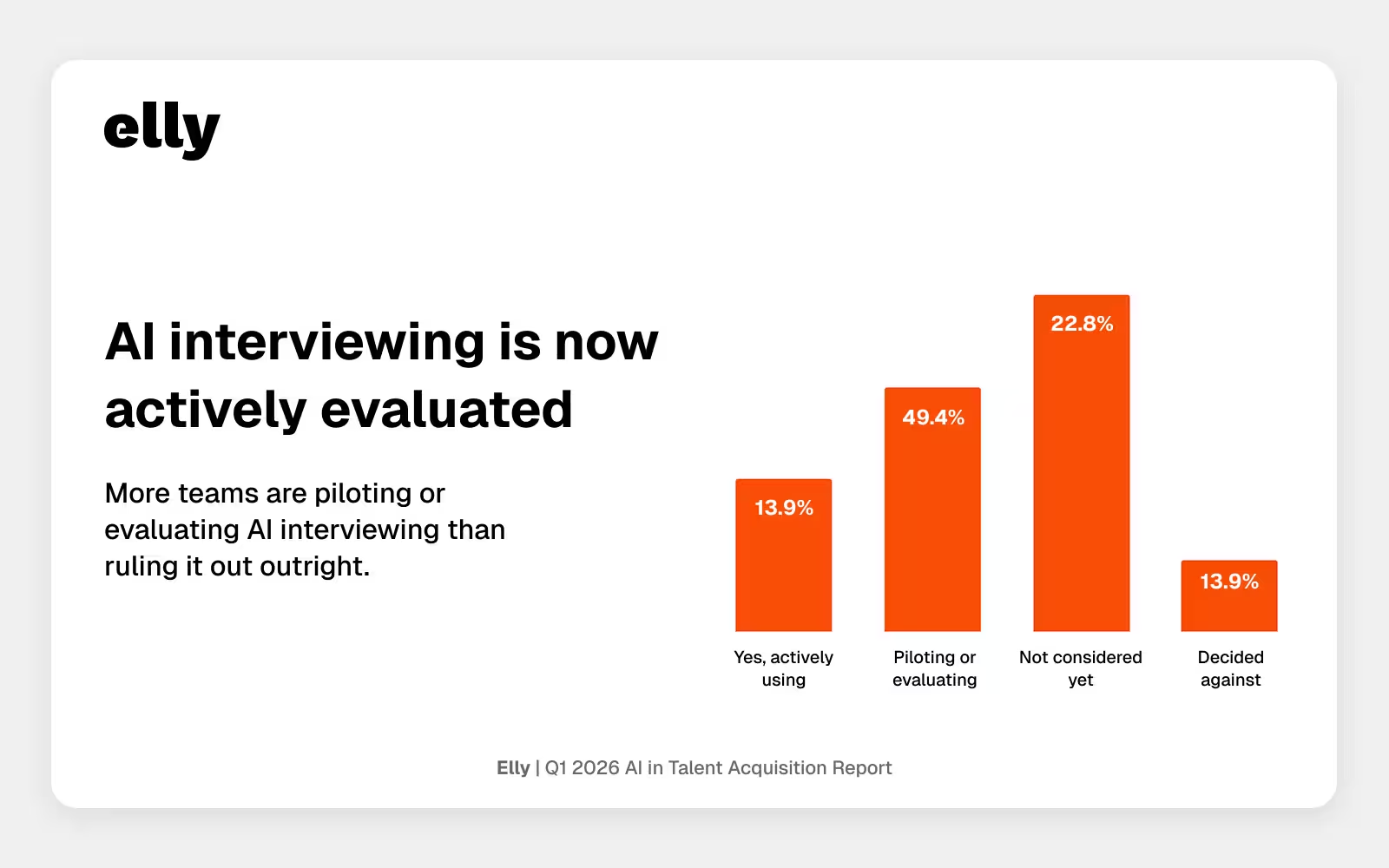

A meaningful share of respondents are already using AI-assisted interviewing or actively piloting it. Many more have not considered it yet, and a smaller group has evaluated it and decided against it.

That shift is easier to see in context. In our Q4 2025 report, only 20% of respondents said they were currently using AI-driven interviewing tools. In Q1 2026, active use remains similar at 19%, but another 30.4% say they are piloting or evaluating AI-assisted interviewing. That suggests the category is moving beyond current users and into broader active consideration.

The teams engaging with it tend to look different from the rest of the market. Among teams using or evaluating AI interviewing, 82.1% expect AI investment to increase, 82.1% say AI is improving hiring outcomes, and 74.4% are high-frequency AI users. Among teams not using or evaluating AI interviewing, each of those figures is materially lower. For example, only 43.9% say AI is improving hiring outcomes.

That makes AI interviewing more than just another use case. It looks more like a marker of broader AI maturity.

What makes recruiters trust AI hiring tools?

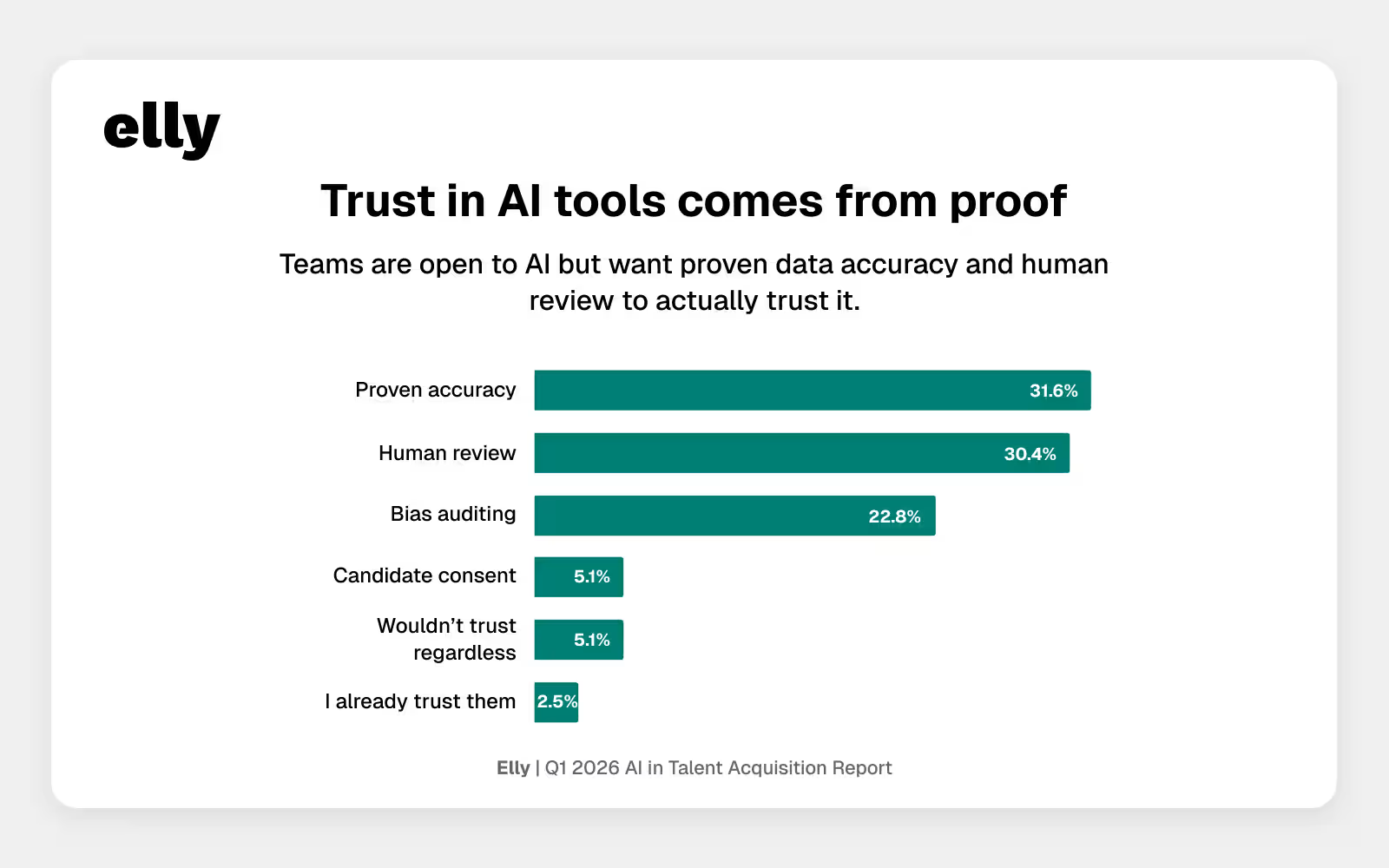

Trust is still a barrier, but it is becoming a more specific one.

When asked what would increase trust in AI recruiting tools, respondents did not point first to convenience or breadth of features. They pointed to proven accuracy data, human review of outputs, and bias audit results.

"The real trust-builder is reducing the manual 'upfront' work through security-approved integrations with an ATS, Drive or file uploads, rather than manual inputs. While human review and bias audits are essential, we still see basic accuracy issues—like AI misidentifying names or orgs, missing critical conversational context or taking notes on unnecessary content—which keeps the manual burden high despite the promise of automation."

Lusely Martinez | Founder & Principal Talent Architect at LAM Talent; Founding Member & Chapter Lead at New York City Talent Collective

That is a useful shift. Trust is becoming less abstract. Teams are getting clearer about what they need in order to move forward: validation, accountability, and evidence that the system behaves as expected.

This is one of the strongest signs that the market is maturing. The question is no longer just whether AI belongs in recruiting. It is what conditions make it reliable enough to use more deeply.

Section 4: Does AI policy improve adoption, outcomes, and investment?

AI governance still matters, but maturity looks broader than policy alone

Governance remains one of the clearest differentiators in the dataset.

Teams with formal AI policies report higher confidence and stronger expectations for budget growth. They are also more likely to be actively using or piloting AI interviewing. That reinforces a broader point: AI maturity is not just about using tools. It is about using them within a system people understand and trust.

But the more interesting story is not just confidence. It is momentum.

Among teams with formal or informal policy, 71.2% say AI is improving outcomes, 59.6% are using or evaluating AI interviewing, and 67.3% expect investment to increase. Among teams with no policy or teams still evaluating one, those numbers are materially lower. For example, only 46.4% say AI is improving outcomes.

That may be because policy does more than set rules. It can also create the structure and permission teams need to move from ad hoc experimentation to more confident adoption.

"A formal policy is less about the rules and more about the 'permission' it grants employees to stop tinkering in the shadows and start innovating with confidence. The most successful teams realize that a policy is simply the byproduct of doing the hard work of enablement—you can’t legislate curiosity, but you can certainly give it a safe place to land."

Matt Frassica | Director, Global People Business Partner at Corelight

At the same time, policy alone is not the whole story. The strongest signals of maturity appear when governance is paired with frequent use, clearer workflow integration, and willingness to expand into more advanced use cases.

Section 5: What is the future of AI in recruiting over the next 3 years?

Recruiters see AI supporting their work, not replacing it

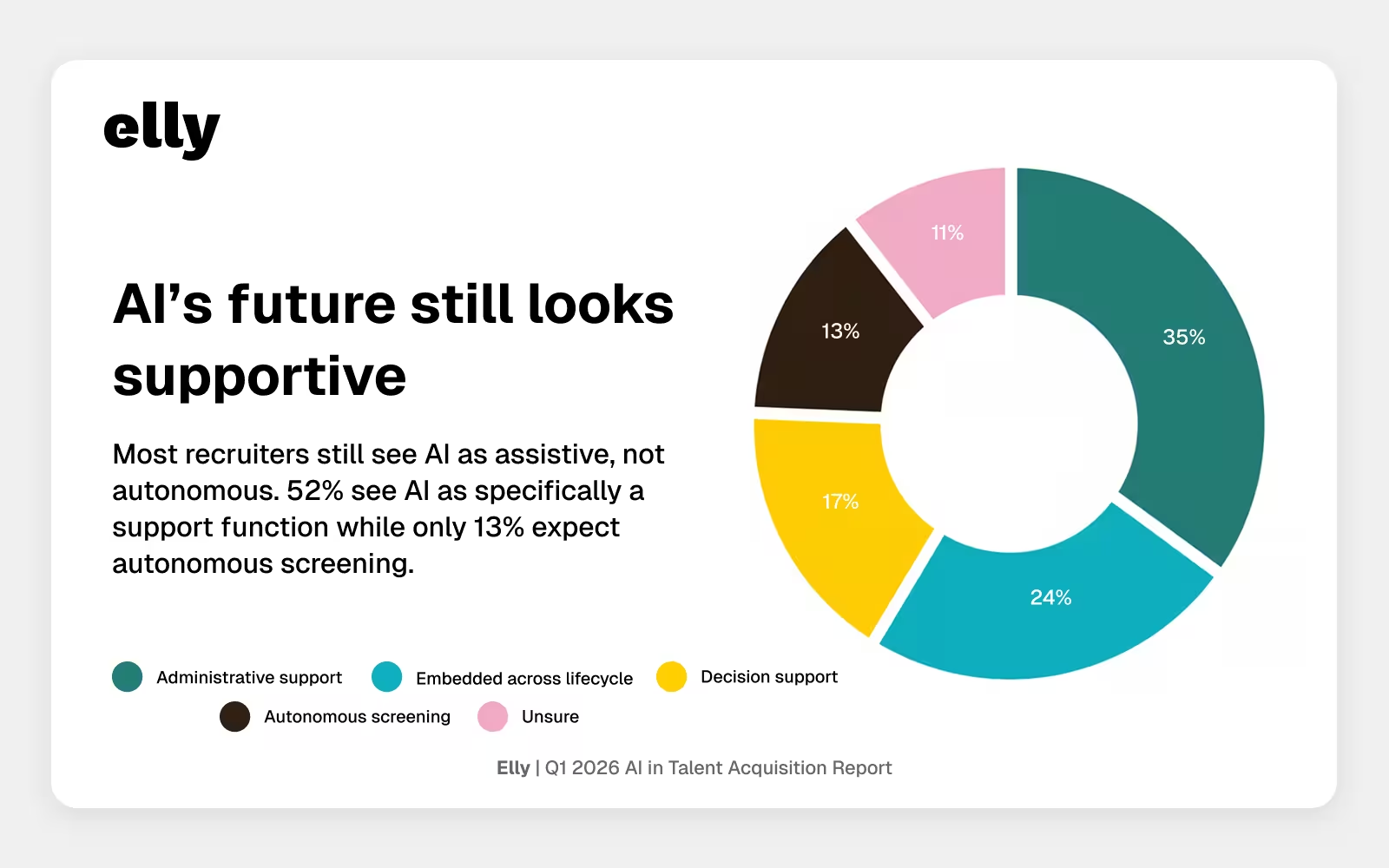

When respondents look three years ahead, most do not describe a future where AI fully takes over recruiting.

Instead, they describe a future where AI is embedded in the workflow in ways that extend human capacity. Administrative support remains the most common expectation. Some expect AI to become fully embedded across the hiring lifecycle. Fewer expect highly autonomous screening or decision-making.

That lines up with the rest of the report. AI is earning trust first in operational tasks. More advanced use cases still require stronger proof, oversight, and governance.

The next phase of AI maturity in Talent Acquisition will not be defined by the number of tools in the stack. It will be defined by how clearly those tools fit into the workflow, how confidently teams can evaluate their impact, and how deliberately they preserve human accountability where it matters most.

The future many teams seem to want is not full automation. It is augmentation with guardrails.

Section 6: How should talent leaders use AI in recruiting more effectively?

What talent leaders should do next

Build around proven operational value

AI is earning trust first in workflows such as note-taking, screening support, and job description drafting. Start where the value is already clear and adoption friction is lower.

Treat proof as a capability, not a nice-to-have

Many teams believe AI is helping, but fewer can clearly measure that impact. The next phase of maturity will require better ways to evaluate workflow gains, hiring outcomes, and risk tradeoffs.

Use governance to create momentum

Clear policies do more than reduce compliance risk. They help teams move faster with more confidence. Governance should enable responsible adoption, not simply slow it down.

Be deliberate with advanced use cases

AI interviewing and other evaluative workflows can unlock meaningful efficiency, but they also raise the trust bar. Treat these categories as strategic pilots with clear guardrails, not lightweight experiments.

Prioritize systems over tool sprawl

The market does not need more disconnected AI features. It needs fewer tools that fit together better. Integration, training, and workflow clarity will matter more than stacking additional point solutions.

The teams that will get the most from AI will not necessarily be the earliest adopters. They will be the most intentional ones.

FAQ: AI in Talent Acquisition

Is AI improving hiring outcomes?

- For many teams, yes, but the picture is uneven. Nearly half of respondents say AI is helping, but the impact is still unclear. Only a smaller share say the impact is clearly measurable. The strongest positive signals come from teams using AI more frequently and teams with clearer governance in place.

What are the most common uses of AI in recruiting?

- The top workflow improvements in this survey were interview notes and summaries, resume screening, and job description writing. These are all operational tasks where AI can save time and improve consistency without taking full ownership of the decision.

How widely are AI interviewing tools being adopted?

- AI interviewing is still early, but it is no longer hypothetical. Some teams are already using it, a larger group is piloting or evaluating it, and many others are still watching from the sidelines. The teams exploring it tend to report stronger overall AI maturity.

What makes recruiters trust AI hiring tools?

- Trust depends most on proven accuracy, human review, and bias audit results. Respondents are looking for evidence and accountability, not just more automation.

Methodology

This analysis is based on a Q1 2026 survey of 103 Talent Acquisition and People professionals across North America.

The survey explored how AI is being used, evaluated, and governed across recruiting workflows, including operational use cases, AI interviewing, trust requirements, policy, and expected future investment. Both quantitative and qualitative responses were collected. Closed-ended questions established benchmarks and directional trends, while open-text responses captured practitioner sentiment and real-world friction.

Percentages are rounded for clarity. For individual questions, percentages are based on respondents who answered that question. Cross-question analysis is based on respondents with non-blank answers to the relevant questions.
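As a rough illustration of the non-blank rule for cross-question analysis, the Python sketch below computes the share of a group giving a positive answer while excluding anyone who left either question blank. It is a minimal sketch, not the actual analysis code behind this report. The field names (`policy`, `outcome`), the answer labels, and the toy responses are all hypothetical and are not survey data.

```python
from typing import Optional


def share_positive(
    responses: list[dict],
    group_key: str,
    group_value: str,
    outcome_key: str,
    positive_values: set[str],
) -> Optional[float]:
    """Percent of a group whose outcome answer counts as positive.

    Respondents with a blank (missing or empty) answer to either question
    are excluded from the denominator. Returns None if nobody is eligible.
    """
    eligible = [
        r for r in responses
        if r.get(group_key) and r.get(outcome_key) and r[group_key] == group_value
    ]
    if not eligible:
        return None
    positive = sum(1 for r in eligible if r[outcome_key] in positive_values)
    return round(100 * positive / len(eligible), 1)


# Hypothetical toy responses for illustration only (not survey data).
toy_responses = [
    {"policy": "formal", "outcome": "improving"},
    {"policy": "formal", "outcome": "no impact"},
    {"policy": "formal", "outcome": ""},       # blank outcome: excluded
    {"policy": "none", "outcome": "improving"},
    {"policy": "", "outcome": "improving"},    # blank policy: excluded
]

formal_share = share_positive(
    toy_responses, "policy", "formal", "outcome", {"improving"}
)
print(formal_share)
```

In the toy data, three respondents report a formal policy but only two answered the outcome question, so the share is calculated out of two rather than three. The same reasoning explains why group sizes in cross-question results can be smaller than the full respondent count.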

About Elly

Elly publishes quarterly research on AI in Talent Acquisition to track how the market evolves. You can read the previous report here or explore how Elly supports more consistent hiring workflows with AI.